The digital economy runs on data. Yet traditional web scraping methods—based on rigid scripts and manual intervention—struggle to keep pace with constantly evolving websites and dynamic content structures. The emergence of AI agents marks a pivotal shift in how organizations approach data acquisition. Instead of relying on static rules, businesses are increasingly adopting AI-powered web scraping to enable more resilient, scalable, and adaptive workflows.

At the core of this transformation lies intelligent web data collection, where systems interpret context, reason about objectives, and adjust to change autonomously. Unlike conventional tools that depend on hard-coded selectors, AI-driven data extraction empowers organizations to define outcomes rather than instructions. This transition represents more than a technical upgrade—it signals a strategic evolution in how companies leverage web intelligence for competitive advantage.

This research report explores the architecture, capabilities, advantages, challenges, performance benchmarks, and long-term implications of AI agents in web scraping, outlining how they are redefining the future of data-driven decision-making.

Traditional scrapers operate through predefined pathways. Developers identify HTML elements, set extraction rules, and deploy scripts that function as long as page structures remain unchanged. However, even minor layout updates can break these systems, requiring constant monitoring and maintenance.

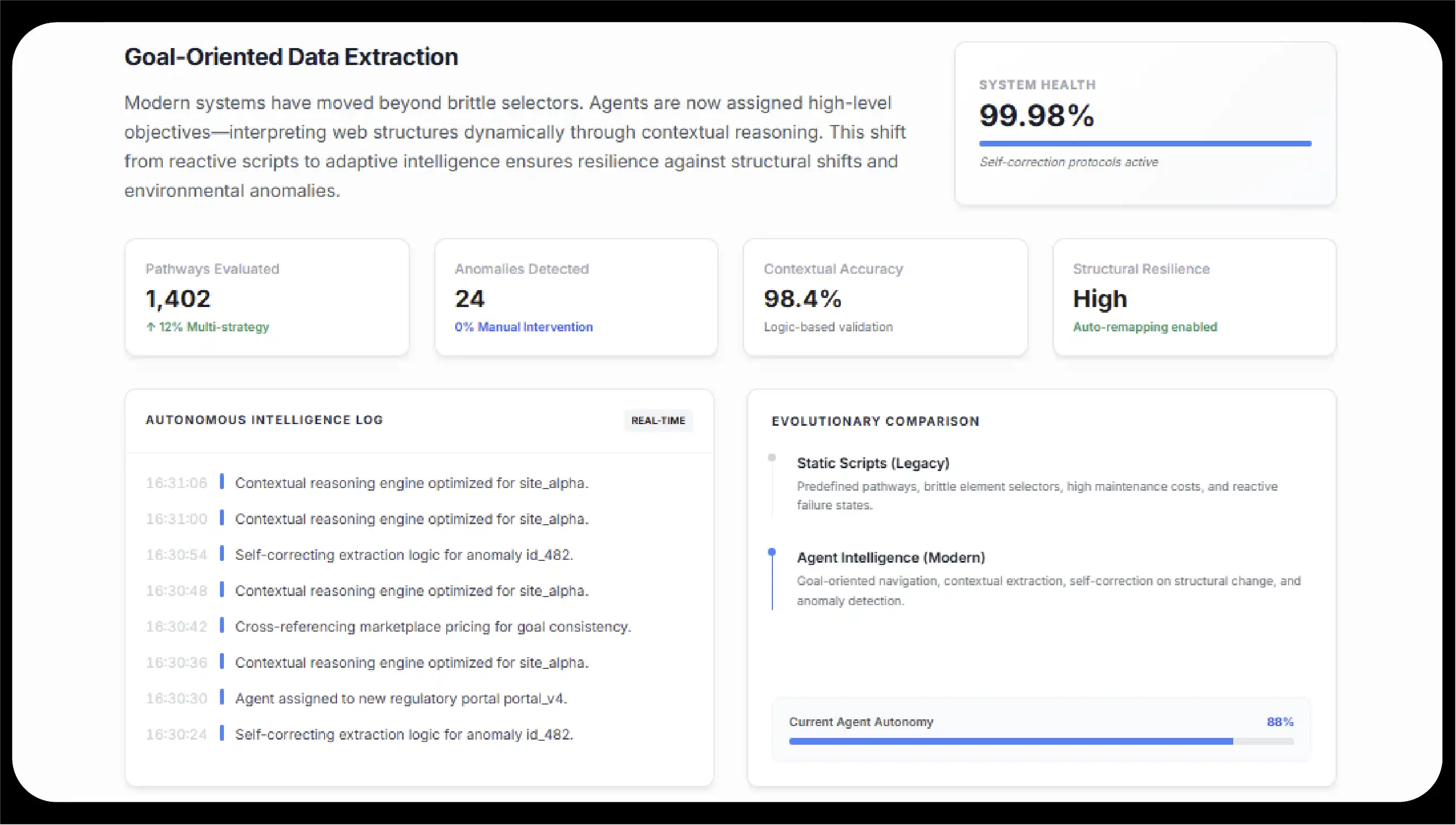

AI agents introduce a fundamentally different paradigm: agent-based web scraping. Rather than following fixed rules, these systems are assigned goals—such as “collect competitor pricing across marketplaces” or “aggregate regulatory updates from government portals.” The agent then interprets the webpage, navigates dynamically, and extracts relevant information based on contextual reasoning.

This shift illustrates the broader future of web scraping with AI, where data extraction becomes adaptive rather than reactive. Agents evaluate multiple strategies, detect anomalies, and self-correct when encountering unexpected structures. Instead of breaking when a selector changes, they reassess the page and identify alternative pathways.
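
The "alternative pathways" idea can be sketched in a few lines. This is a minimal illustration, not how any particular agent is built: the two HTML snippets, the strategy functions, and the validation rule are all hypothetical, and regular expressions stand in for the richer page understanding the text describes.

```python
import re
from typing import Callable, List, Optional, Tuple

# Hypothetical "before" and "after" versions of one product page:
# a redesign renamed the CSS class a fixed-selector script relied on.
OLD_HTML = '<div><span class="price">$19.99</span></div>'
NEW_HTML = '<div><span class="amount-v2">$19.99</span></div>'

Strategy = Callable[[str], Optional[str]]

def by_fixed_class(html: str) -> Optional[str]:
    """Rule-based strategy: matches only the exact class name 'price'."""
    m = re.search(r'class="price">([^<]+)<', html)
    return m.group(1) if m else None

def by_currency_pattern(html: str) -> Optional[str]:
    """Fallback strategy: find any text shaped like a dollar amount."""
    m = re.search(r'\$\d+(?:\.\d{2})?', html)
    return m.group(0) if m else None

def looks_like_price(value: str) -> bool:
    """Validation so a strategy cannot 'succeed' with unrelated text."""
    return re.fullmatch(r'\$\d+(?:\.\d{2})?', value) is not None

def extract_price(html: str, strategies: List[Strategy]) -> Optional[Tuple[str, str]]:
    """Try each strategy in order; return (value, strategy name) or None."""
    for strategy in strategies:
        value = strategy(html)
        if value is not None and looks_like_price(value):
            return value, strategy.__name__
    return None

STRATEGIES = [by_fixed_class, by_currency_pattern]
print(extract_price(OLD_HTML, STRATEGIES))
print(extract_price(NEW_HTML, STRATEGIES))
```

On the old layout the fixed selector is enough. On the new layout it returns nothing, and the extractor falls through to the pattern-based strategy instead of failing outright.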

The following table presents modeled, benchmark-based performance comparisons across operational parameters typical of enterprise deployments.

| Performance Metric | Traditional Script-Based Scraper | AI Web Scraper | Improvement with AI Agents | Business Impact |
|---|---|---|---|---|
| Average Setup Time per New Website | 12–20 hours | 4–8 hours | 60% faster | Faster onboarding of new data sources |
| Maintenance Frequency (Monthly Fixes) | 6–10 updates | 1–3 adaptive recalibrations | 70% reduction | Lower engineering overhead |
| Failure Rate After Website UI Change | 40–65% | 8–15% | 75% reduction | Improved reliability |
| Data Accuracy (Structured Output) | 85–92% | 95–99% | 6–10% gain | Cleaner analytics pipelines |
| Scalability Across Domains | Linear resource growth | Elastic scaling | 50–80% cost efficiency | Better ROI at scale |
| Real-Time Monitoring Capability | Limited | Continuous adaptive crawling | High | Competitive advantage |
| Contextual Data Interpretation | Minimal | Advanced NLP classification | Significant | Insight-driven extraction |
| Automation Level | Rule-dependent | Semi-autonomous to autonomous | High | Reduced manual supervision |
| Multi-Page Navigation Intelligence | Hardcoded paths | Dynamic decision-making | Substantial | Complex workflow handling |
| Error Recovery | Manual intervention required | Self-correcting logic | High resilience | Operational continuity |

These benchmarks illustrate how AI agents reduce operational fragility while improving performance consistency.
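
As a rough sanity check on the first three rows, the percentage reductions can be recomputed from the midpoints of the quoted ranges. Using midpoints is an assumption made here for illustration; the report does not state how its headline percentages were derived.

```python
def midpoint(low: float, high: float) -> float:
    """Middle of a quoted range, used as a single representative value."""
    return (low + high) / 2

def percent_reduction(before: float, after: float) -> float:
    """Relative drop from `before` to `after`, expressed in percent."""
    return (before - after) / before * 100

# Setup time: 12-20 hours vs 4-8 hours (midpoints 16 and 6).
setup = percent_reduction(midpoint(12, 20), midpoint(4, 8))
# Monthly fixes: 6-10 vs 1-3 (midpoints 8 and 2).
maintenance = percent_reduction(midpoint(6, 10), midpoint(1, 3))
# Failure rate after a UI change: 40-65% vs 8-15% (midpoints 52.5 and 11.5).
failure = percent_reduction(midpoint(40, 65), midpoint(8, 15))

print(f"setup: {setup:.1f}%, maintenance: {maintenance:.1f}%, failure: {failure:.1f}%")
```

The midpoint figures come out at 62.5%, 75.0%, and about 78.1%, close to but slightly above the 60%, 70%, and 75% listed in the table.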

AI scraping agents extend beyond automation. Their strength lies in reasoning, contextual awareness, and adaptive execution.

Goal-Driven Intelligence

Unlike scripts that follow explicit instructions, AI agents operate on outcome-based logic. Whether performing trend analysis, sentiment monitoring, product catalog tracking, or AI-enhanced digital shelf monitoring, they align extraction activities directly with defined business objectives.

Adaptive Learning

Through reinforcement learning and pattern recognition, agents refine their performance over time. They identify reliable structures, detect recurring patterns, and optimize navigation strategies. This capability enables scalable and resilient autonomous web scraping across diverse industries.
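
One very simple form of this kind of learning is an epsilon-greedy selector that tracks how often each extraction strategy succeeds and gradually favors the reliable ones. The sketch below is an assumption-laden illustration rather than a description of any production agent: the class, the strategy names, and the success rates passed to `simulate` are all hypothetical.

```python
import random

class StrategySelector:
    """Epsilon-greedy choice among named extraction strategies."""

    def __init__(self, names, epsilon=0.1, seed=0):
        self.epsilon = epsilon              # share of rounds spent exploring
        self.rng = random.Random(seed)
        self.attempts = {n: 0 for n in names}
        self.successes = {n: 0 for n in names}

    def success_rate(self, name):
        tries = self.attempts[name]
        return self.successes[name] / tries if tries else 0.0

    def choose(self):
        names = list(self.attempts)
        # Try every strategy once before trusting any statistics.
        untried = [n for n in names if self.attempts[n] == 0]
        if untried:
            return untried[0]
        # Explore occasionally; otherwise exploit the best observed rate.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(names)
        return max(names, key=self.success_rate)

    def record(self, name, succeeded):
        self.attempts[name] += 1
        if succeeded:
            self.successes[name] += 1

def simulate(true_rates, rounds=200, seed=0):
    """Run the selector against hypothetical per-strategy success rates."""
    rng = random.Random(seed)
    selector = StrategySelector(list(true_rates), epsilon=0.1, seed=seed)
    for _ in range(rounds):
        name = selector.choose()
        selector.record(name, rng.random() < true_rates[name])
    return selector

result = simulate({"css_selector": 0.3, "semantic_match": 0.9})
print(result.attempts)
```

The small exploration rate matters: without it, a strategy that happened to fail on its first attempt would never be retried, even if it later became the most reliable option after a site change.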

Contextual Understanding

Modern systems increasingly leverage semantic models and large language models to interpret page content. This allows for categorization, summarization, and classification directly within the scraping workflow. Such contextual reasoning is particularly valuable in applications like AI agents for price intelligence, where accurate interpretation of discounts, bundles, and dynamic pricing structures is essential for competitive analysis.
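
To make the pricing point concrete, the sketch below normalizes three kinds of offer text into a comparable unit price. It uses fixed regular expressions as a stand-in for the language-model interpretation described above, and the `Offer` type, the `interpret_offer` function, and the offer formats are all hypothetical.

```python
import re
from dataclasses import dataclass

@dataclass
class Offer:
    kind: str          # "plain", "bundle", or "percent_off"
    unit_price: float  # effective price of one unit, in dollars

def interpret_offer(text: str) -> Offer:
    """Turn a displayed offer string into a comparable unit price."""
    text = text.strip()
    # Bundle, e.g. "3 for $12.00": divide the total by the quantity.
    m = re.fullmatch(r'([1-9]\d*) for \$(\d+(?:\.\d+)?)', text)
    if m:
        qty, total = int(m.group(1)), float(m.group(2))
        return Offer("bundle", round(total / qty, 2))
    # Discount, e.g. "20% off $50.00": apply the percentage to the base.
    m = re.fullmatch(r'(\d+)% off \$(\d+(?:\.\d+)?)', text)
    if m:
        pct, base = int(m.group(1)), float(m.group(2))
        return Offer("percent_off", round(base * (100 - pct) / 100, 2))
    # Plain price, e.g. "$9.99".
    m = re.fullmatch(r'\$(\d+(?:\.\d+)?)', text)
    if m:
        return Offer("plain", float(m.group(1)))
    raise ValueError(f"unrecognized offer text: {text!r}")

for sample in ["$9.99", "3 for $12.00", "20% off $50.00"]:
    print(sample, "->", interpret_offer(sample))
```

Comparing raw strings such as "$12.00" and "$9.99" would misrank these offers. Only after interpretation does the bundle's effective unit price of 4.00 become visible, and real-world offer wording is far more varied than three fixed patterns can cover.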

Human–Agent Collaboration

AI agents operate best within a supervised framework. Human oversight ensures compliance, validates anomalies, and refines extraction strategies. This collaborative structure balances automation with accountability, especially in use cases such as AI agents for lead generation scraping, where data accuracy, relevance, and ethical considerations directly impact business outcomes.
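
A common way to implement this kind of supervision is to route low-confidence extractions to a human review queue. The following is a minimal sketch under stated assumptions: the record fields, the 0.8 threshold, and the idea that the agent reports a confidence score are all illustrative choices, not details from a specific product.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class ExtractedRecord:
    source_url: str
    field: str
    value: str
    confidence: float  # 0.0 to 1.0, assumed to be reported by the agent

def route(records: List[ExtractedRecord],
          threshold: float = 0.8) -> Tuple[List[ExtractedRecord], List[ExtractedRecord]]:
    """Split records into auto-accepted and needs-human-review lists."""
    accepted, needs_review = [], []
    for record in records:
        if record.confidence >= threshold:
            accepted.append(record)
        else:
            needs_review.append(record)
    return accepted, needs_review

batch = [
    ExtractedRecord("https://example.com/a", "company", "Acme Ltd", 0.97),
    ExtractedRecord("https://example.com/b", "company", "Acme (?)", 0.41),
]
accepted, needs_review = route(batch)
print(len(accepted), "accepted;", len(needs_review), "sent for review")
```

The threshold is a policy decision rather than a technical one: lowering it reduces reviewer workload, while raising it puts more records in front of a person before they reach downstream systems.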

Cross-Domain Scalability

Whether tracking thousands of SKUs, monitoring financial disclosures, or aggregating research publications, agents can scale without linear increases in technical workload. This scalability supports enterprise-wide AI data intelligence initiatives.

Industry Use Case Performance Data

AI agents are transforming web scraping from a technical tool into a business intelligence engine. The following table outlines modeled deployment scenarios across industries.

| Industry Sector | Use Case | AI Capability Applied | Monthly Data Volume Processed | Efficiency Gain | Strategic Outcome |
|---|---|---|---|---|---|
| Retail & E-commerce | AI-enhanced digital shelf monitoring | Visual recognition + NLP | 2–5 million SKUs | 65% faster updates | Improved brand compliance |
| Retail & Marketplaces | AI agents for price intelligence | Dynamic pricing detection | 10M+ price points | 50% quicker price adjustments | Revenue optimization |
| B2B Sales | AI agents for lead generation scraping | Behavioral signal detection | 500K prospects | 70% prospecting automation | Increased sales pipeline velocity |
| Finance | Regulatory document tracking | Document summarization | 200K filings | 60% faster compliance review | Risk mitigation |
| Travel & Hospitality | Competitor rate monitoring | Multi-site adaptive crawling | 3M+ listings | 55% operational savings | Dynamic pricing accuracy |
| Manufacturing | Supplier intelligence gathering | Structured data harmonization | 1M+ supplier records | 45% sourcing efficiency | Supply chain resilience |
| Academic Research | Public data aggregation | Semantic clustering | 5 TB (annual) | 50% faster compilation | Accelerated research timelines |
| Real Estate | Property listing aggregation | Contextual classification | 2M+ listings | 40% analysis time saved | Better market forecasting |
| Media Monitoring | Content aggregation & sentiment | NLP sentiment scoring | 15M articles | 68% faster reporting | Brand reputation tracking |
| SaaS & Tech | AI-agent-based competitor monitoring | Feature comparison modeling | 100K product updates | 60% faster insight generation | Competitive positioning |

These data-driven comparisons show how AI agents elevate scraping into strategic intelligence generation.

An advanced AI web scraper typically integrates multiple components:

This multi-layered design enables resilient and adaptive extraction workflows.

AI agents empower businesses through:

Organizations increasingly demand custom AI scraping solutions tailored to industry-specific goals. At scale, enterprises rely on integrated AI web scraping services to maintain compliance, infrastructure stability, and analytics readiness.

Despite significant benefits, AI-based scraping requires responsible oversight.

Responsible governance ensures that automation enhances value without increasing risk.

The roadmap for smart web scraping AI agents in 2026 includes deeper reasoning capabilities, enhanced contextual awareness, and improved integration with enterprise ecosystems.

We are witnessing the early stages of fully adaptive data systems capable of blending robotic process automation, semantic understanding, and predictive analytics.

As organizations expand digital intelligence strategies, they increasingly adopt AI web scraping services that deliver scalable, compliant, and enterprise-ready datasets.

AI agents are redefining the data extraction landscape. What was once rule-bound scripting is now evolving into adaptive, reasoning-driven intelligence systems. The growth of AI-agent-based competitor monitoring signals a transformation toward contextual, scalable, and business-aligned automation.

Future advancements in LLM-powered web scraping will significantly enhance contextual reasoning and dynamic decision-making across complex web environments.

Continued innovation in machine-learning web scraping frameworks will reduce extraction errors and improve structural pattern recognition.

Enterprises will adopt modular architectures supported by an AI data scraping API to seamlessly connect extraction pipelines with analytics and business intelligence platforms.

The transition toward intelligent agents represents more than automation—it represents a new foundation for data strategy. Businesses that embrace adaptive systems today will be best positioned to lead in the era of intelligent, scalable, and insight-driven web intelligence.
